
We are specialists in the practical application of Artificial Intelligence, including Voice and Sound Recognition AI, Machine Learning, Deep Learning and Data Science software used within Engineering processes and operations.

AI can be trained to accurately recognise specific sounds, whether it be precise process noises, or distinct human vocal emotions.

The power of sound and voice recognition

Automatically recognising patterns, tones, frequencies, pitch and variability in sounds and voices is an extremely powerful capability across a wide range of businesses, operations, tasks and processes.

By learning from known sound and voice characteristics, including the highly variable and unpredictable acoustic attributes in between, sound and voice recognition becomes more insightful, more meaningful, more accurate and much more beneficial when used for tasks and processes such as sound classification, automatic and rapid sound-based diagnosis, anomaly detection, quality control, computer hearing, behavioural analysis, investigations, self-driving vehicles, threat assessment, robotics, security, and responding to audible changes in any type of dynamic, moving environment, amongst many others.

The accuracy of sound and voice recognition depends heavily on what is already known about the sounds and voices in question (e.g. their full range of acoustic attributes): the more past voices and sounds there are to learn from, the more accurate and informed future voice and sound recognition can be.

Artificial Intelligence makes sound and voice recognition highly dynamic and incredibly accurate

Learning from past voices and sounds in order to recognise the same type of audible stimuli in the future might sound straightforward - it is of course exactly what a human brain does all the time - but making this process automatic and asking a machine to do it is extremely complex and demanding. This is mainly due to the huge amount of variability involved in acoustics: pitch, volume, frequency, amplitude, range, modulation and so on. These variations can occur within just one sound, let alone when numerous sounds share the same environment.

If a traditional software programming approach were used, the computer would continually be asking "if this, then learn that; if x and y, learn z". This stepwise methodology is extremely limited, inefficient and resource-heavy, because the program will only do what the programmer has explicitly told it to look for, making it next to impossible to account for all possibilities and variations, even in the simplest of sounds. You certainly couldn't use this approach for complex and diverse scenarios, e.g. multiple and variable voices and sounds occurring at the same time or in the same space.

With AI and Machine Learning technology, it is now possible to learn from vast amounts of variable, constantly changing voice and sound data in order to carry out much more accurate sound recognition, automatically.

ELDR-I Sound is a powerful Deep Learning Voice and Sound Recognition package that can accurately and rapidly help recognise unlimited voices and sounds based on the variable and complex past acoustic data it has learnt from

ELDR-I Sound is built around our powerful ELDR-I AI Engine, which is a Deep Learning Convolutional Neural Network. ELDR-I Sound uses Supervised Learning and Sound/Voice Classification to learn how to recognise all types of voices and sounds thrown at it.
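To make the idea of Supervised Learning and Sound Classification concrete, here is a minimal sketch in Python. It is not ELDR-I Sound's code and it does not use a Convolutional Neural Network: as a deliberately tiny stand-in it uses a nearest-centroid classifier over a coarse frequency spectrum. The labels (`hum`, `whine`), the sample rate, the tone frequencies and every function name are assumptions made purely for illustration.

```python
import cmath
import math
import random

SAMPLE_RATE = 8000  # Hz; assumed value for this toy example
N_SAMPLES = 256     # samples per clip; assumed value


def tone(freq_hz, noise=0.1, rng=random):
    """Synthesise a noisy sine tone as a list of samples (stand-in for real audio)."""
    return [
        math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE) + rng.gauss(0, noise)
        for n in range(N_SAMPLES)
    ]


def spectrum(samples, n_bins=32):
    """Magnitudes of the first n_bins DFT coefficients, normalised to sum to 1."""
    n = len(samples)
    mags = []
    for k in range(n_bins):
        coeff = sum(s * cmath.exp(-2j * math.pi * k * i / n) for i, s in enumerate(samples))
        mags.append(abs(coeff))
    total = sum(mags) or 1.0
    return [m / total for m in mags]


def train(labelled_clips):
    """Supervised step: average the spectra of all clips that share a label."""
    sums, counts = {}, {}
    for label, samples in labelled_clips:
        feats = spectrum(samples)
        acc = sums.setdefault(label, [0.0] * len(feats))
        for i, f in enumerate(feats):
            acc[i] += f
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def classify(model, samples):
    """Return the label whose average spectrum is closest to this clip's spectrum."""
    feats = spectrum(samples)
    return min(model, key=lambda label: math.dist(model[label], feats))


# Labelled past data: each clip varies slightly in frequency and carries noise.
rng = random.Random(0)
training = [("hum", tone(250 + rng.uniform(-30, 30), rng=rng)) for _ in range(10)]
training += [("whine", tone(750 + rng.uniform(-30, 30), rng=rng)) for _ in range(10)]
model = train(training)

# A new, unseen clip is classified from what was learnt.
print(classify(model, tone(260, rng=rng)))
```

The point of the sketch is the workflow rather than the model: labelled past sounds go in, a learnt representation comes out, and new sounds are classified against it. A deep network would replace the hand-built spectrum and centroid steps with learnt features.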

Once ELDR-I Sound has learnt (trained) from the data, it is primed to receive current voice and sound data, either in real time or from a recording, and to rapidly and accurately recognise/classify the sounds within it in order to give a response. That response can range from a simple classification or a "yes/no" to triggering sophisticated downstream events.
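The three kinds of response described above (a classification, a "yes/no", and a triggered downstream event) could be sketched as follows. This is an illustrative Python sketch only: the `Classification` type, the `is_match` and `dispatch` helpers, the `bearing_fault` label and the 0.8 confidence threshold are all hypothetical and are not taken from ELDR-I Sound.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Classification:
    """Hypothetical result type: a predicted label and a confidence in [0, 1]."""
    label: str
    confidence: float


def is_match(result: Classification, target: str, threshold: float = 0.8) -> bool:
    """Reduce a classification to a yes/no answer for one target sound."""
    return result.label == target and result.confidence >= threshold


def dispatch(
    result: Classification,
    handlers: Dict[str, Callable[[Classification], None]],
    threshold: float = 0.8,
) -> bool:
    """Run the handler registered for the label when confidence is high enough.

    Returns True if a handler was run, False otherwise.
    """
    handler = handlers.get(result.label)
    if handler is None or result.confidence < threshold:
        return False
    handler(result)
    return True


# Downstream events are recorded in a list here; a real system might raise an
# alarm, write to a queue or stop a machine instead.
events: List[str] = []
handlers = {
    "bearing_fault": lambda r: events.append(f"stop line: {r.label} ({r.confidence:.2f})"),
}

result = Classification(label="bearing_fault", confidence=0.93)
print(result.label)                         # simple classification
print(is_match(result, "bearing_fault"))    # yes/no
print(dispatch(result, handlers))           # trigger a downstream event
```

Keeping the decision logic separate from the classifier means thresholds and handlers can be changed without retraining anything.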

ELDR-I Sound is highly dynamic, autonomous, configurable, graphical and easily integrated

Dynamic

ELDR-I Sound can handle and learn from multiple sources, sizes and complexities of voice and sound data for numerous environments and requirements simultaneously. Data can be changed at any time and it can continually learn.

Autonomous

By default, ELDR-I Sound is plug and play: you can simply give it appropriately formatted acoustic data and it will automatically learn from it, including self-optimisation, self-scaling and classification.

Configurable

In some cases you may be happy with plug and play. However, almost everything in ELDR-I Sound is configurable, from labelling of images, colours, displays, output format, learning modes and learning accuracy all the way through to Convolutional Neural Network dynamics and dimensions.

Graphical

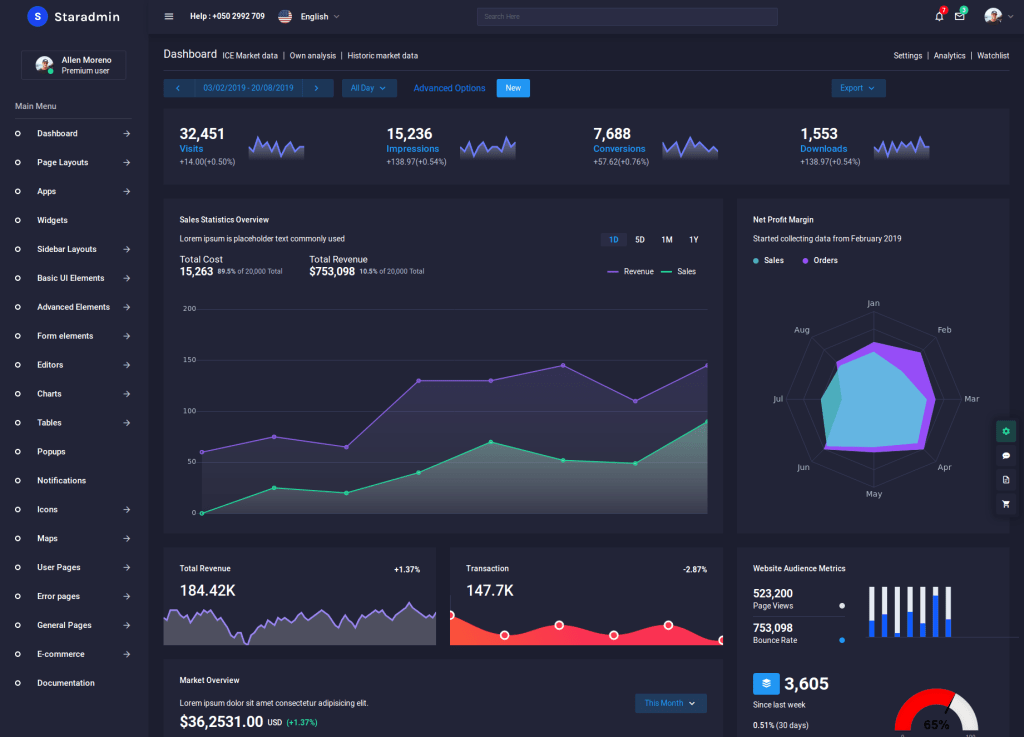

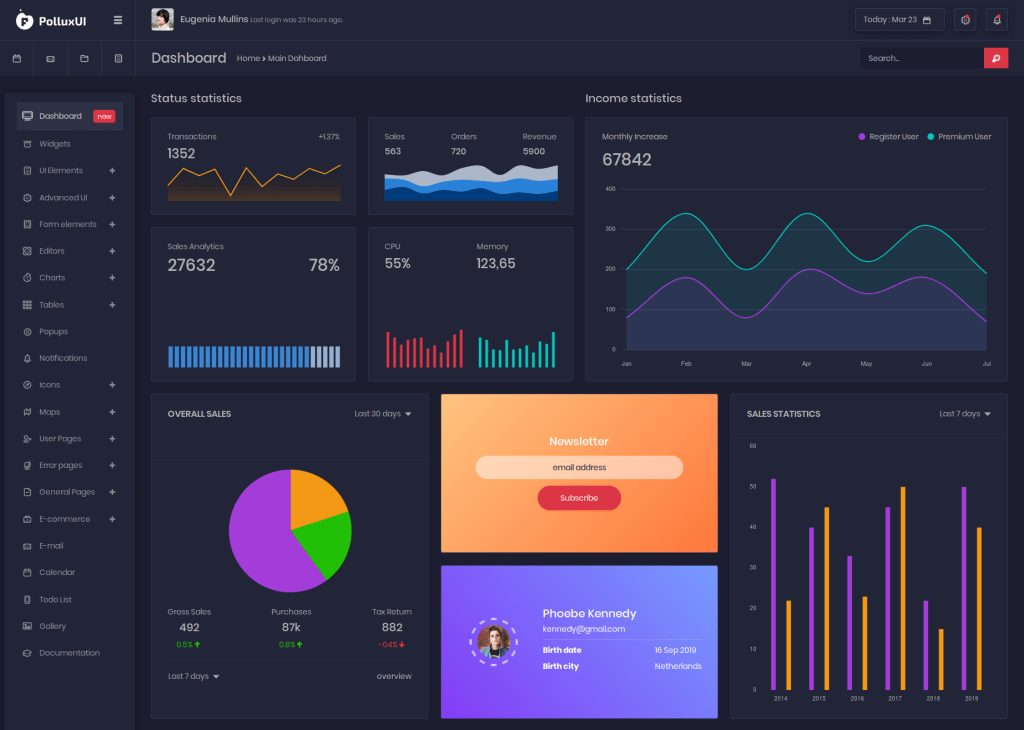

ELDR-I Sound uses a rich intuitive GUI Dashboard from which to manage the whole AI process (sound and voice data preparation, learning, outputting and testing), including a comprehensive suite of gamified charts and other visual displays to monitor everything.

Easily Integrated

AI Integration is our speciality. We understand that AI can be used in a variety of ways and in numerous system types and processes. We build our software to be entirely modular, with multiple integration methods and points, ranging from network-based RESTful API integration to direct coupling at the code level, depending on the response time required, amongst other considerations.
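As a rough illustration of what network-based RESTful integration can look like from the client side, the Python sketch below builds (but does not send) an HTTP request carrying an audio clip. Everything specific in it is an assumption for illustration: the host name, the `/classify` path and the `audio_b64` field are hypothetical and do not describe a documented ELDR-I Sound API.

```python
import base64
import json
import urllib.request


def build_classify_request(audio_bytes, base_url="https://eldr-host.example.invalid"):
    """Build a POST request with the audio clip base64-encoded in a JSON body.

    The URL, path and JSON field name are hypothetical placeholders.
    """
    payload = json.dumps(
        {"audio_b64": base64.b64encode(audio_bytes).decode("ascii")}
    ).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/classify",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Four placeholder bytes stand in for a real recording.
request = build_classify_request(b"\x00\x01\x02\x03")
print(request.get_method(), request.full_url)

# Sending would be a call such as urllib.request.urlopen(request, timeout=5),
# omitted here because the host above is a placeholder.
```

A network call of this kind adds round-trip latency, which is one reason direct coupling at the code level may be preferred where response time is critical.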

Key points about our Voice and Sound Recognition AI software

- Can help recognise voices and sounds within live feeds or recordings to a high degree of accuracy, after learning from past acoustic data

- Can handle numerous data streams and outputs simultaneously

- Uses our powerful ELDR-I AI Deep Learning Convolutional Neural Network Engine

- Dynamic

- Autonomous

- Configurable

- Graphical

- Easily Integrated

Example areas where Fennaio can help with AI, Machine Learning and Deep Learning in the Engineering industry

AI can be used all over the Engineering industry, including in these disciplines, processes, operations and tasks:

- modelling

- analytics

- forecasting and predictions

- mechanical engineering

- aeronautical engineering

- electrical engineering

- electronic engineering

- chemical engineering

- process engineering

- management

- civil engineering

- general engineering

- design

- risk

- safety

- research

- planning

- process design

- process control

- process operations

- process economics

- process data analytics

- many more...